How do you trust artificial intelligence, or AI, when it doesn’t know what it doesn’t know? How do you safely move computing systems trained in simulation into the real world, where mistakes carry real consequences?

In the School of Computing and Augmented Intelligence, part of the Ira A. Fulton Schools of Engineering at Arizona State University, those questions are the starting point for undergraduate research projects, where students tackle real-world problems as they reshape the future of computing.

That work earned national recognition this year, as four Fulton Schools undergraduate students received Honorable Mentions in the 2025–2026 Outstanding Undergraduate Researcher Awards from the Computing Research Association, or CRA. The program honors students across North America who show exceptional promise in computing research, prioritizing originality and impact.

Meet four students whose work spans statistical modeling, robotics, uncertainty-aware AI and sustainable computing, and who are already thinking like leading researchers.

Alec Fishbach: Why people stay or leave

Alec Fishbach, a junior in the computer science program, studies what happens beneath the surface of large organizations. Under the mentorship of Bing Si, a Fulton Schools associate professor of industrial engineering, Fishbach analyzed why people join, disengage from and recommit to professional communities. To do that, he turned to survey responses from more than 7,000 members of INFORMS, the world’s largest professional organization for operations research and management sciences, using the data to track patterns in how and why members stay engaged or drift away over time.

The challenge wasn’t coming up with a theory. It was making sense of the data itself. The surveys were complex, incomplete and full of overlapping information. That kind of messiness reflects how people think and behave, but it can overwhelm traditional analytical tools.

To handle that complexity, Fishbach developed the process for cleaning and organizing the data and built the study’s main model, collaborating with Si to improve the accuracy of the results. The outcome was a clearer picture of what drives long-term participation and inclusion.

The technical payoff mattered. But for Fishbach, the deeper draw was the process itself.

“Research can be a great fit if you find yourself wanting to understand how and why things work beyond what is covered in class, enjoy exploring problems in depth and feel curious about questions that don’t have immediate or clear answers,” Fishbach says.

Khoa Vo: Teaching robots about the real world

Khoa Vo, a senior majoring in computer science, focuses on what happens when AI moves beyond simulation and encounters the physical world. In the Data Mining and Reinforcement Learning Lab, Vo worked under the supervision of Hua Wei, a Fulton Schools assistant professor of computer science and engineering, on sim-to-real transfer — one of robotics’ most persistent challenges.

Testing new traffic control ideas on real city streets isn’t an option. Mistakes could put people at risk. Instead, Vo helped build a physical testing setup that allowed researchers to safely study how autonomous systems behave before they ever reach the real world.

Working with both simulated environments and small robotic vehicles, Vo explored whether self-driving cars trained in simulation could perform reliably once they encountered real-world conditions. His work showed that learning from expert examples in simulation could meaningfully improve real-world behavior, helping robots stay in their lanes and avoid obstacles more effectively.

“My effort provided a user-friendly and comprehensive study, demonstrating the potential of simulation data in improving robot performance,” Vo says.

Owen Krueger: When AI should say “I don’t know”

Owen Krueger, a senior in the computer science program, is focused on a deceptively simple question: How can we tell when an AI system is correct and when it’s guessing?

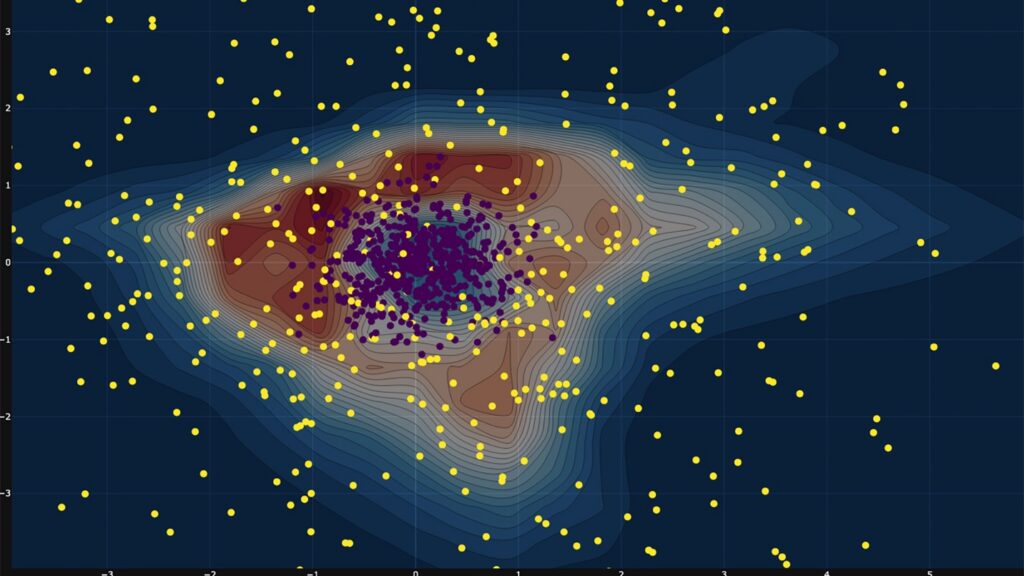

Working with Giulia Pedrielli, a Fulton Schools associate professor of industrial engineering, Krueger studies how AI systems represent uncertainty. In the real world, AI is often asked to make decisions using incomplete or unfamiliar information, but many systems struggle to signal when they’re operating outside their comfort zone.

To study that problem, Krueger created controlled test scenarios where the level of uncertainty was known in advance. This allowed him to closely observe how AI systems respond when information is missing, noisy or ambiguous, all conditions they’re likely to face outside the lab. He then explored new ways to curb overconfidence, testing whether AI systems could be trained to better recognize the limits of their own knowledge.

“If we don’t know when AI is outside familiar territory, we can’t responsibly deploy it in high-risk settings,” Krueger says.

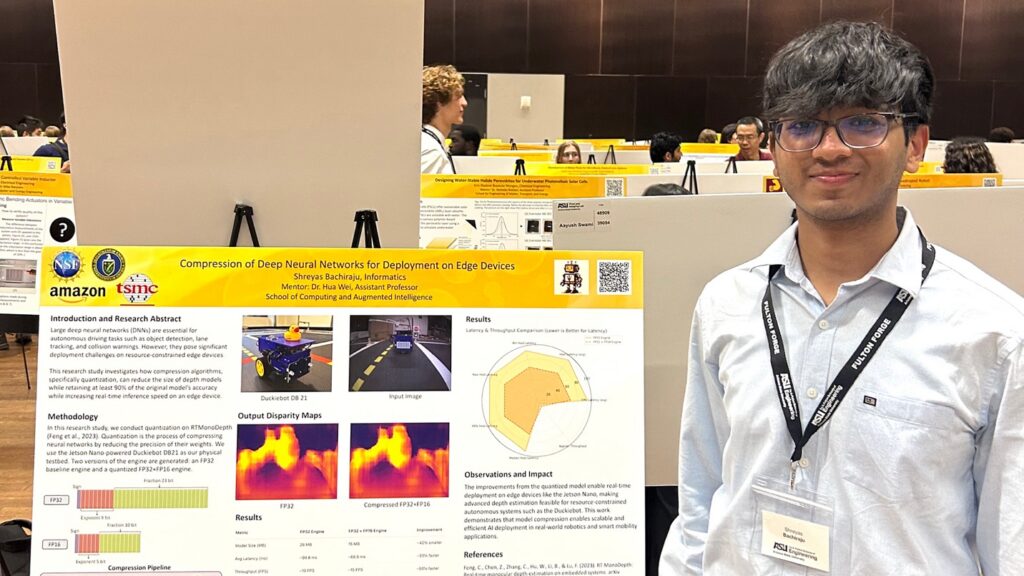

Shreyas Bachiraju: Where efficient AI meets urban sustainability

Shreyas Bachiraju, a senior majoring in informatics, is interested in a practical question that often gets overlooked in AI research: What happens when powerful computing systems meet real-world limits?

Like Vo, Bachiraju worked under the mentorship of Wei, studying how AI can be used to improve transportation systems without assuming unlimited computing power. His research explored how intelligent systems trained to analyze traffic or visual data can still function when they’re deployed on smaller, less powerful devices, such as traffic cameras at intersections, roadside sensors or compact computers embedded in vehicles.

In one project, he redesigned an AI system so it could run faster and more efficiently on low-power hardware without losing accuracy. In another, he examined why some large AI models consume so much energy and how they might be redesigned to use less.

“In urban and edge environments, constraints aren’t something you can ignore,” Bachiraju says. “They’re the design goal.”

Why undergraduate research matters

Having four students recognized by the CRA in the same year is a point of pride for ASU and the School of Computing and Augmented Intelligence.

The hands-on, exploratory approach to research that these projects share is central to why the CRA Outstanding Undergraduate Researcher Awards exist, according to Nadya Bliss, executive director of the ASU Advanced Capabilities for National Security Institute and a former chair of the CRA Computing Community Consortium.

“Undergraduate research is where students first encounter the real nature of computing,” Bliss says. “They learn how to work through open-ended problems, to iterate, to fail and to adapt. That experience prepares them to take on the challenges of both today and tomorrow.”