In a courtroom, truth often hinges on storytelling. But when that story involves hex values, file systems, packet captures or metadata timestamps, even the most seasoned judge can struggle to follow the plot.

Imagine a public defender who can’t afford a digital forensics expert. Or a police officer trying to explain technical evidence clearly enough to secure a search warrant. Or a jury staring blankly as an expert witness describes how a crime unfolded inside a hard drive. As technology seeps into nearly every criminal case, justice increasingly depends on whether complex cyber evidence can be understood by nontechnical people.

Three students in the School of Computing and Augmented Intelligence, part of the Ira A. Fulton Schools of Engineering at Arizona State University, think artificial intelligence, or AI, might help.

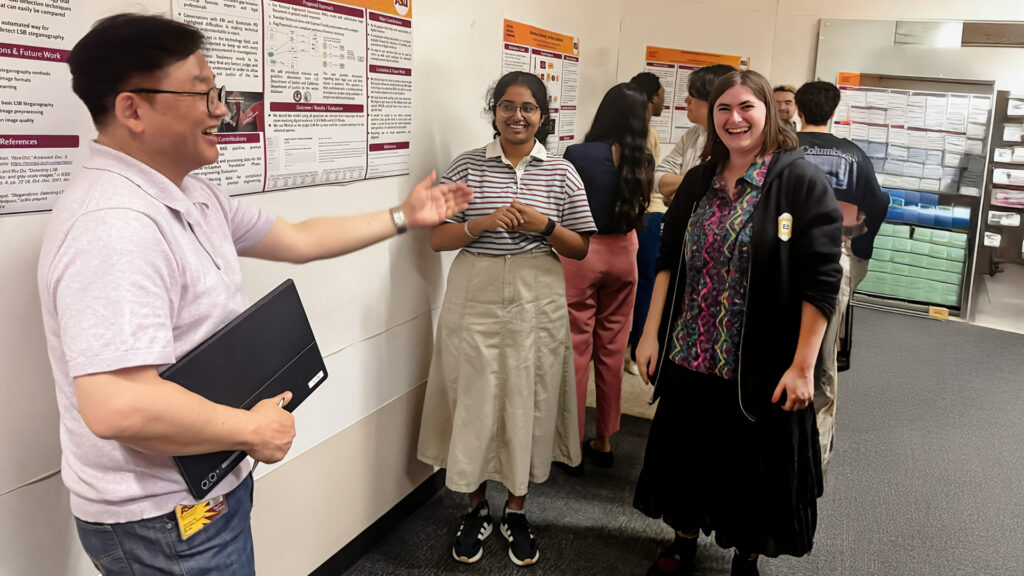

In CSE 598 Forensics Computing, a graduate-level course that blends cybersecurity, law and real-world investigation, Ariadne Dimarogona, Aditi Ganapathi and Easton Kelso built Legal Laysplainer, an AI-powered system designed to translate cyber forensics evidence into plain language that judges, lawyers, jurors and law enforcement officers can easily understand.

When technology takes the stand

“Cyber forensics are needed when a crime is committed and there’s technology involved,” says Kelso, a master’s student in cybersecurity who expects to graduate in May 2026.

That might mean a computer used as an attack surface, a phone that holds key location data or a server that quietly logged every step of a digital break-in. At its core, digital forensics pulls truth from these devices by reconstructing events from the data left behind.

Dimarogona is a Fulton Schools student finishing her master’s degree in computer science with a cybersecurity concentration as part of the CyberCorps: Scholarship for Service program. She says that in an era when nearly every crime leaves a digital trail, cyber forensics are no longer niche.

“Technology shows up in almost every case now,” Dimarogona says. “Even crimes that aren’t considered ‘cyber’ often rely on digital evidence.”

The problem, the students quickly learned, isn’t just collecting digital evidence. It’s explaining it.

During the semester, the class heard directly from experts on the front lines: agents from the Federal Bureau of Investigation’s Phoenix division and officers from the Scottsdale Police Department. Those professionals told students that expert testimony is now routine, and routinely difficult.

“The officers told us that they’re brought in to do expert testimony quite a lot,” Kelso says. “But judges, lawyers and jurors all have their own jobs. It’s not their role to have a computer scientist’s understanding of how technology works.”

To bridge the gap, the experts told the students that they often rely on metaphors, comparing deleted files to footprints in the sand or data packets to envelopes in the mail. But that kind of explanation takes time, experience and access to trained specialists, and not every case has that luxury.

“That’s when we started asking how we could combine that need with tools like large language models and AI to make these explanations easier to understand,” Kelso says.

The result was Legal Laysplainer.

AI under oath

The students built what’s known as a retrieval-augmented generation, or RAG, system on top of an existing large language model similar to ChatGPT. In plain terms, that means the AI doesn’t just generate answers from its training data. It first retrieves information from a curated library of vetted legal and forensic documents, then builds its explanation from those sources. The model is still powerful, but it operates within the boundaries those sources set.
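For readers curious what "retrieve first, then explain" looks like in practice, here is a minimal sketch in Python. It is not the students' code: the three-entry `LIBRARY`, the `STOPWORDS` list, the word-overlap scoring and the function names `retrieve` and `build_prompt` are all hypothetical, chosen only to illustrate the idea. A real system would likely use a much larger document store and a learned similarity measure, and would send the finished prompt to a language model.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for a curated library of vetted documents.
LIBRARY = {
    "deleted-files": "When a file is deleted, its directory entry is removed, "
                     "but the contents often remain on disk until overwritten.",
    "packet-capture": "A packet capture records network traffic, including "
                      "source and destination addresses and timestamps.",
    "metadata": "File metadata stores timestamps for when a file was created, "
                "modified and last accessed.",
}

# Small illustrative stopword list so common words do not drive the match.
STOPWORDS = {"a", "the", "to", "on", "of", "and", "is", "what", "for",
             "when", "its", "but", "until"}


def tokenize(text):
    """Lowercase the text and keep alphabetic words that are not stopwords."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(question, library, k=2):
    """Return up to k document ids that share vocabulary with the question."""
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id)
              for doc_id, text in library.items()]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(question, library, k=2):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    ids = retrieve(question, library, k)
    sources = "\n".join(f"[{doc_id}] {library[doc_id]}" for doc_id in ids)
    return (
        "Explain in plain language for a nontechnical reader. "
        "Use only the sources below, and say so if they do not cover the question.\n"
        f"Sources:\n{sources}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("What happens to deleted files on a disk?", LIBRARY))
```

The key design choice is that the prompt carries the retrieved passages with it, so the model's explanation can be checked against named sources rather than taken on faith.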

“People are skeptical of AI and rightly so,” says Ganapathi, a Fulton Schools doctoral student in computer science researching human factors in cybersecurity. “We grounded our system in U.S. Department of Justice and National Institute of Justice documentation and prior case materials so it isn’t just making things up. We wanted to build something that experts could trust.”

The system is designed primarily for digital forensics experts, but the students see broader applications. Lawyers could use it to understand evidence when an expert isn’t available. Judges could reference it when reviewing warrants. Police officers could tap it to better articulate why technical details matter in an investigation.

Scottsdale police officers, the students learned, sometimes struggle to justify warrants simply because the technical language doesn’t land.

“A tool like this would try to explain to the judge why the warrant is important,” Ganapathi says.

To evaluate their system, the team even turned AI on itself. A second language model scores Legal Laysplainer’s explanations, checking whether answers are stable, readable for nontechnical users and faithful to source material. Next, the students hope to run human-subject studies to assess usability.
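The article does not say how the second model scores each quality, so the sketch below swaps in simple word-counting heuristics purely to make the three checks concrete. Everything here is an assumption for illustration: `stability` compares repeated answers to the same question, `avg_sentence_length` is a rough readability proxy, and `source_overlap` is a crude faithfulness proxy. None of these is the team's method, and a language-model judge would assess meaning rather than shared words.

```python
import re
from itertools import combinations


def words(text):
    """Lowercase word list used by all three illustrative checks."""
    return re.findall(r"[a-z']+", text.lower())


def jaccard(a, b):
    """Share of words two sets have in common (1.0 if both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def stability(answers):
    """Mean pairwise word overlap across repeated answers to one question."""
    sets = [set(words(a)) for a in answers]
    pairs = list(combinations(sets, 2))
    if not pairs:
        return 1.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


def avg_sentence_length(text):
    """Words per sentence; shorter sentences are usually easier to follow."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return len(words(text)) / len(sentences) if sentences else 0.0


def source_overlap(answer, source):
    """Fraction of the answer's distinct words that also appear in the source."""
    answer_words = set(words(answer))
    if not answer_words:
        return 0.0
    return len(answer_words & set(words(source))) / len(answer_words)
```

Heuristics like these are cheap and repeatable, which makes them a reasonable sanity check, but they cannot tell whether an explanation is actually correct or clear to a juror. That gap is what the planned human-subject studies would address.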

Learning on the record

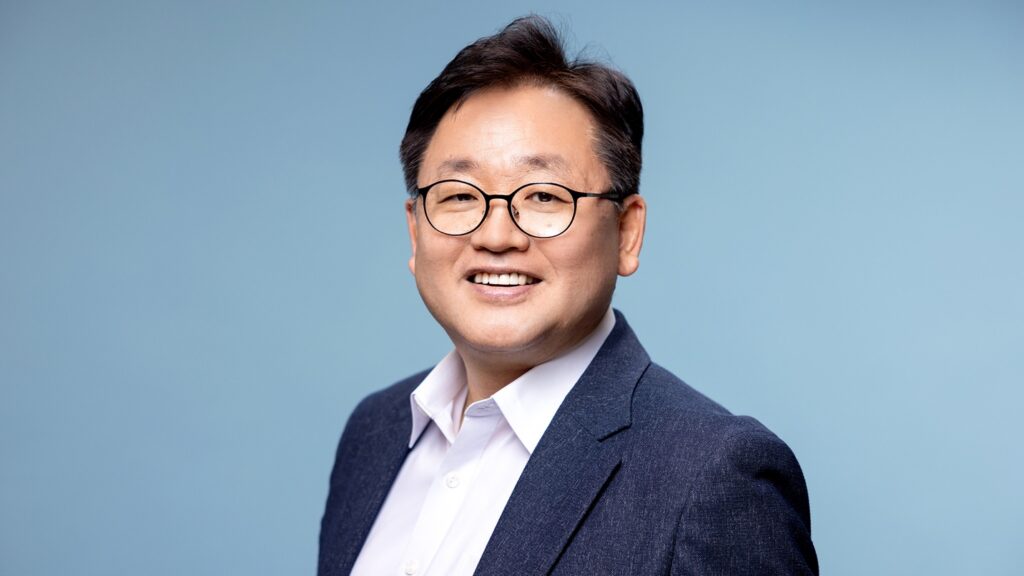

The project is a snapshot of how the Fulton Schools trains the next generation of cybersecurity professionals through hands-on, interdisciplinary work with real consequences. The course is part of broader efforts led by Gail-Joon Ahn, a globally recognized cybersecurity innovator and professor in the School of Computing and Augmented Intelligence. He hopes to make cyber forensics an important and permanent part of the school’s curriculum.

“My goal is to ensure students go beyond theory and engage with realistic, hands-on security challenges that mirror real-world environments,” Ahn says. “It’s been incredibly rewarding to see them tackle meaningful forensic and AI-driven cybercrime problems.”

This type of training matters. The global cybersecurity workforce shortage continues, with millions of unfilled positions worldwide. Projects like Legal Laysplainer push students beyond theory, challenging them to design tools that could operate in the real world, not just in a classroom lab.

For the students, the work is far from over. Ganapathi plans to complete her doctoral studies, continuing research efforts with Jaron Mink, a Fulton Schools assistant professor of computer science and engineering, to make cybersecurity tools more usable and trustworthy through machine learning. Kelso is weighing a move into industry against further graduate study. Dimarogona will soon step into a government cybersecurity role, bringing her courtroom-minded perspective with her.

All three hope Legal Laysplainer will eventually be tested by the very experts who inspired it.

“If this could actually be used in courtrooms, that would be amazing,” Kelso says.

In a justice system increasingly shaped by digital traces, clarity may be just as important as code, and the difference between understanding and confusion could be the right explanation at the right moment.